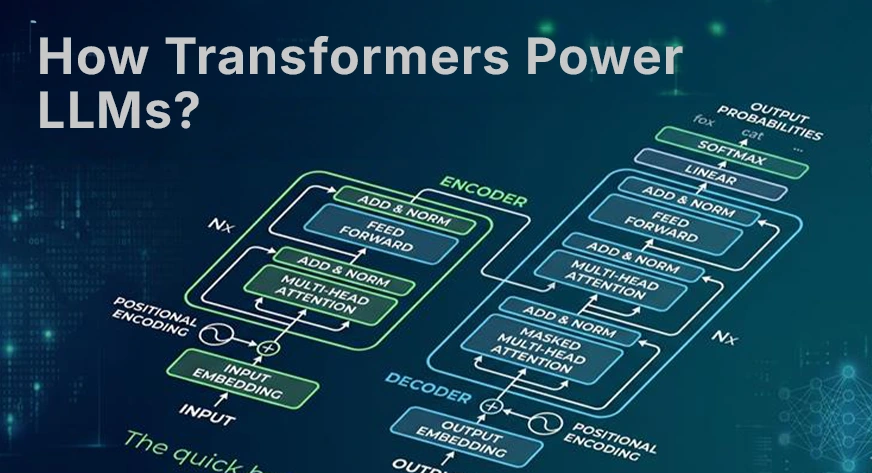

Transformers power modern NLP systems and have largely replaced earlier RNN and LSTM approaches. Because they process all tokens in a sequence in parallel rather than one at a time, they enable efficient and scalable language modeling, and they form the backbone of models like GPT and Gemini.

In this article, we break down how Transformers work, moving from text representation through self-attention and multi-head attention to the full Transformer block, showing ...

A skeptical reading of the article reveals several manipulation patterns:

Distortion: The article presents Transformers in a uniformly positive light, emphasizing their efficiency and scalability without discussing potential drawbacks or ethical concerns. Because the full picture is not presented, this could be seen as a form of semantic manipulation (ARC-0024 Ambiguity).

Emotional exploitation: While not directly present in this article, emotional exploit...