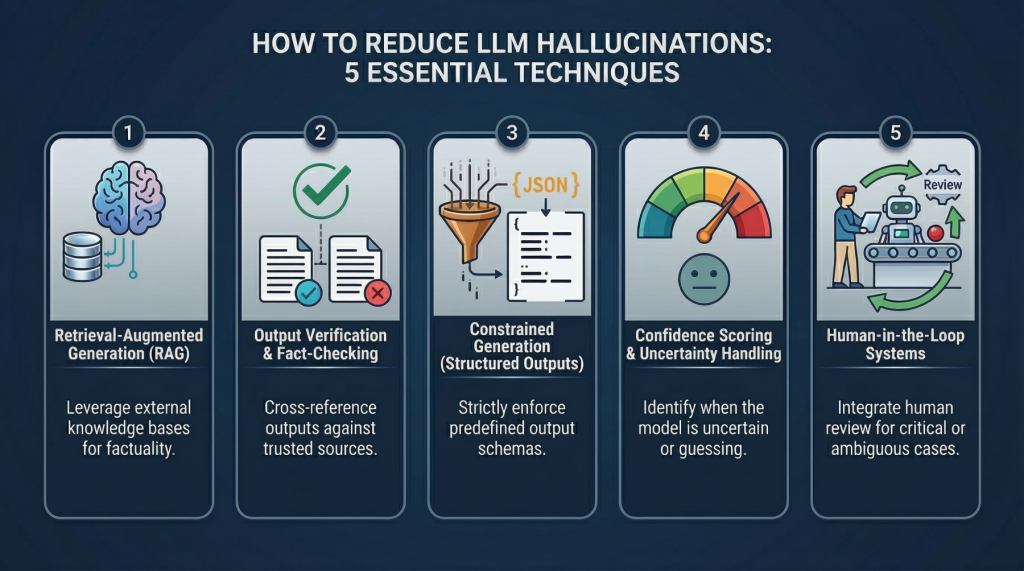

In this article, you will learn why large language model hallucinations happen and how to reduce them using system-level techniques that go beyond prompt engineering.

Topics we will cover include:

- What causes hallucinations in large language models.

- Five practical techniques for detecting and mitigating hallucinated outputs in production systems.

- How to implement these techniques with simple...

These techniques share one idea: instead of trusting model output blindly, a system should detect and verify the information it contains. A language model generates responses from probabilities rather than verified facts, so its output, like that of any technology, calls for caution and critical thinking. The strategies outlined in the article aim to make AI systems more robust to that limitation.
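To make the point about probabilities concrete, here is a minimal Python sketch of how a next token can be chosen by sampling from a distribution. It is a toy illustration, not how any particular model is implemented: the prompt, the candidate tokens, the scores in `TOY_LOGITS`, and the helper names `softmax` and `sample_next` are all invented for this example.

```python
import math
import random

# Hypothetical scores a model might assign to candidate next tokens for the
# prompt "The capital of Australia is". These numbers are invented.
TOY_LOGITS = {"Canberra": 2.0, "Sydney": 1.6, "Melbourne": 0.4}


def softmax(logits):
    """Convert raw scores into a probability distribution."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}


def sample_next(logits, rng):
    """Draw one token in proportion to its probability.

    Nothing here consults a source of facts: a plausible but wrong token
    is returned whenever the random draw happens to land on it.
    """
    probs = softmax(logits)
    tokens = list(probs)
    weights = [probs[tok] for tok in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so repeated runs give the same draws
    print(softmax(TOY_LOGITS))
    print([sample_next(TOY_LOGITS, rng) for _ in range(10)])
```

Because the wrong candidates keep a nonzero share of the probability mass, some draws will return them. That is why a check has to sit outside the sampling step: nothing in the sampler can tell a correct token from a plausible one.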
