Introduction

Over the past year, large language models have rapidly expanded in both scale and capability. Frontier models such as Kimi K2.5, GLM 5, and Qwen 3.5 now operate with hundreds of billions of parameters and context windows stretching to millions of tokens, enabling long-context reasoning, agentic workflows, and complex tool use. As these models grow more capable, efficient inference has...

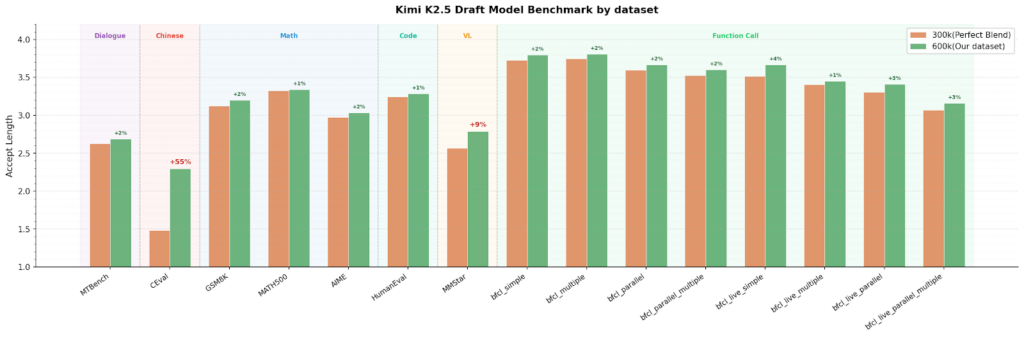

TorchSpec tackles the scalability challenges of speculative decoding training. Its central contribution is a disaggregated architecture that eliminates storage bottlenecks and lets inference and training resources scale independently. By leveraging Mooncake’s high-performance data transfer capabilities, TorchSpec achieves near-line-rate transfers and zero-copy efficiency, making it a robust solution for traini...
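To make the disaggregation idea concrete, here is a minimal Python sketch under a simple producer/consumer assumption. Inference workers push per-request hidden states into a bounded in-memory channel, and training workers pull from it. No intermediate dataset is written to storage, and the two pools are sized independently. The `queue.Queue` is only a stand-in for Mooncake's transfer layer. It does not model RDMA, zero-copy transfers, or TorchSpec's actual API. All names (`Sample`, `inference_worker`, `training_worker`, `run`) are hypothetical.

```python
import queue
import threading
from dataclasses import dataclass


@dataclass
class Sample:
    """Hypothetical unit of training data passed from inference to training."""
    request_id: int
    token_ids: list[int]
    hidden_states: list[list[float]]  # toy stand-in for target-model activations, one vector per token


def inference_worker(worker_id: int, prompts: list[list[int]], channel: queue.Queue) -> None:
    """Producer: runs a mocked target model and streams samples straight to trainers."""
    for i, tokens in enumerate(prompts):
        # Toy "hidden state": a 2-d vector per token. A real target model would emit activations.
        hidden = [[float(t), float(worker_id)] for t in tokens]
        channel.put(Sample(request_id=worker_id * 1000 + i, token_ids=tokens, hidden_states=hidden))


def training_worker(channel: queue.Queue, consumed: list[int], lock: threading.Lock) -> None:
    """Consumer: pulls samples as they arrive, with no intermediate files on disk."""
    while True:
        sample = channel.get()
        if sample is None:  # sentinel: no more data for this trainer
            channel.task_done()
            break
        # A real trainer would run a draft-model training step on sample.hidden_states here.
        with lock:
            consumed.append(sample.request_id)
        channel.task_done()


def run(num_inference: int, num_training: int, prompts_per_worker: int) -> list[int]:
    # Bounded buffer applies backpressure: fast producers block instead of piling data up.
    channel: queue.Queue = queue.Queue(maxsize=8)
    consumed: list[int] = []
    lock = threading.Lock()

    trainers = [
        threading.Thread(target=training_worker, args=(channel, consumed, lock))
        for _ in range(num_training)
    ]
    producers = [
        threading.Thread(
            target=inference_worker,
            args=(w, [[w, j, j + 1] for j in range(prompts_per_worker)], channel),
        )
        for w in range(num_inference)
    ]
    for t in trainers + producers:
        t.start()
    for p in producers:
        p.join()  # all samples are enqueued before any sentinel
    for _ in trainers:
        channel.put(None)  # one sentinel per trainer
    for t in trainers:
        t.join()
    return sorted(consumed)
```

For example, `run(num_inference=3, num_training=2, prompts_per_worker=4)` returns all 12 request IDs, and either pool size can change without touching the other side. The bounded queue is the design choice that matters in this sketch: it throttles producers to the consumers' pace rather than letting generated data accumulate in storage.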