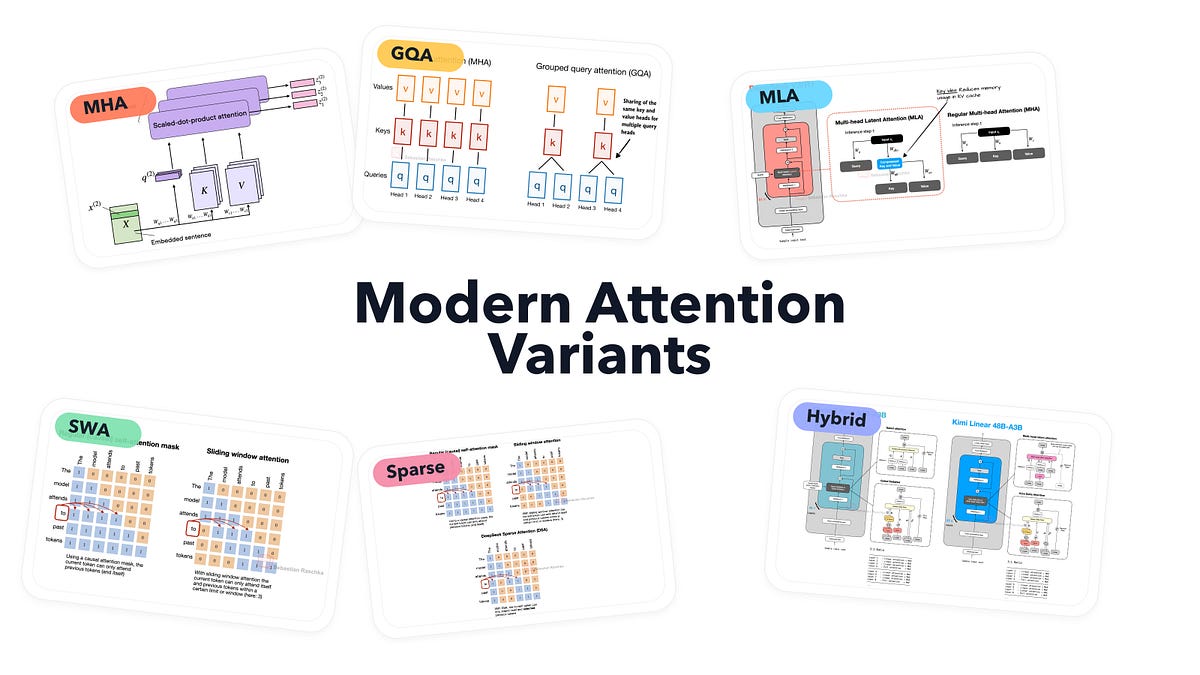

A Visual Guide to Attention Variants in Modern LLMs

From MHA and GQA to MLA, sparse attention, and hybrid architectures

I had originally planned to write about DeepSeek V4. Since it still hasn’t been released, I used the time to work on something that had been on my list for a while, namely, collecting, organizing, and refining the different LLM architectures I have covered over the past few years...

The article gives a detailed, educational overview of attention mechanisms in modern LLMs and is a useful resource for understanding how these architectures evolved and what trade-offs they make. It presents each attention variant in its strongest form, with clear explanations and examples, and acknowledges both strengths and limitations. The piece relies on factual presentation and balanced analysis rather than emotional appeals or distortion.

One key pattern dete...