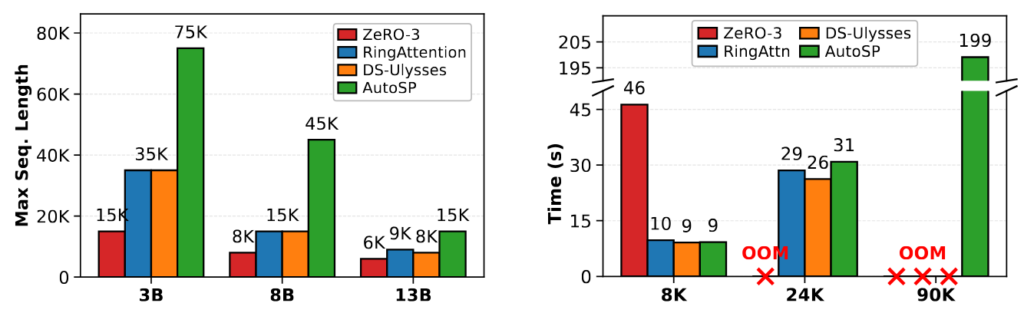

Large language models (LLMs) are increasingly being trained for extremely long-context tasks, where token counts can exceed 100k. At these sequence lengths, out-of-memory (OOM) errors start to surface, even when scaling device counts with conventional training techniques such as ZeRO/FSDP. To circumvent these issues, sequence parallelism (SP): partitioning the input tokens across devices to enable ...
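To make the partitioning idea concrete, here is a minimal sketch in plain Python of splitting one token sequence into contiguous per-device shards. The function name `shard_sequence` and the contiguous, near-equal split are assumptions chosen for illustration; this is not AutoSP's API. It also covers only the data layout: a real SP implementation needs cross-device communication so that attention can see tokens held on other devices.

```python
def shard_sequence(tokens, num_devices):
    """Split `tokens` into `num_devices` contiguous shards of near-equal length.

    Hypothetical helper for illustration only; not part of any library.
    """
    if num_devices <= 0:
        raise ValueError("num_devices must be positive")

    # Every device gets `base` tokens; the first `extra` devices get one more.
    base, extra = divmod(len(tokens), num_devices)

    shards = []
    start = 0
    for rank in range(num_devices):
        length = base + (1 if rank < extra else 0)
        shards.append(tokens[start:start + length])
        start += length
    return shards


# Example: a 10-token sequence split across 4 devices.
tokens = list(range(10))
shards = shard_sequence(tokens, 4)
for rank, shard in enumerate(shards):
    print(f"device {rank}: {shard}")
```

Each device then holds roughly `len(tokens) / num_devices` tokens, so under this layout the activation memory that grows with sequence length is spread across devices rather than replicated on each one.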

AutoSP simplifies a complex technical process for LLM researchers. By automating the implementation of sequence parallelism, it eliminates the need for invasive code changes and reduces the complexity of long-context training. This could broaden access to these capabilities, making it easier for more researchers to experiment with long-context models. However, it's important to consider potential challenges rel...