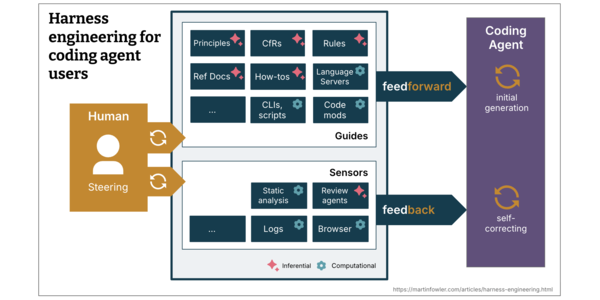

Harness engineering for coding agent users

To let coding agents work with less supervision, we need ways to increase our confidence in their results. As software engineers, we have a natural trust barrier with AI-generated code: LLMs are non-deterministic, they don't know our context, and they don't really understand the code; they think in tokens. This article explores a mental model that brings ...

Analyzing the article against several patterns yields the following observations:

1. Emotional exploitation (ARC-0027 Provocation): The article does not attempt to provoke readers; rather, it aims to stimulate interest in the topic of Harness Engineering.

2. Distortion (ARC-0031 Exaggeration to Absurdity): There is no evident exaggeration or distortion in the article. It presents the concept of Harness Engineering in a balanced and realistic manner.

3. Bad faith (ARC-0025 Sealioning): The article does not engage in sea...