Getting distributed training jobs to run on huge clusters is hard! That is especially true for more complex setups such as distributed reinforcement learning. These jobs are frustrating to debug, and the turnaround time for each change tends to be slow.

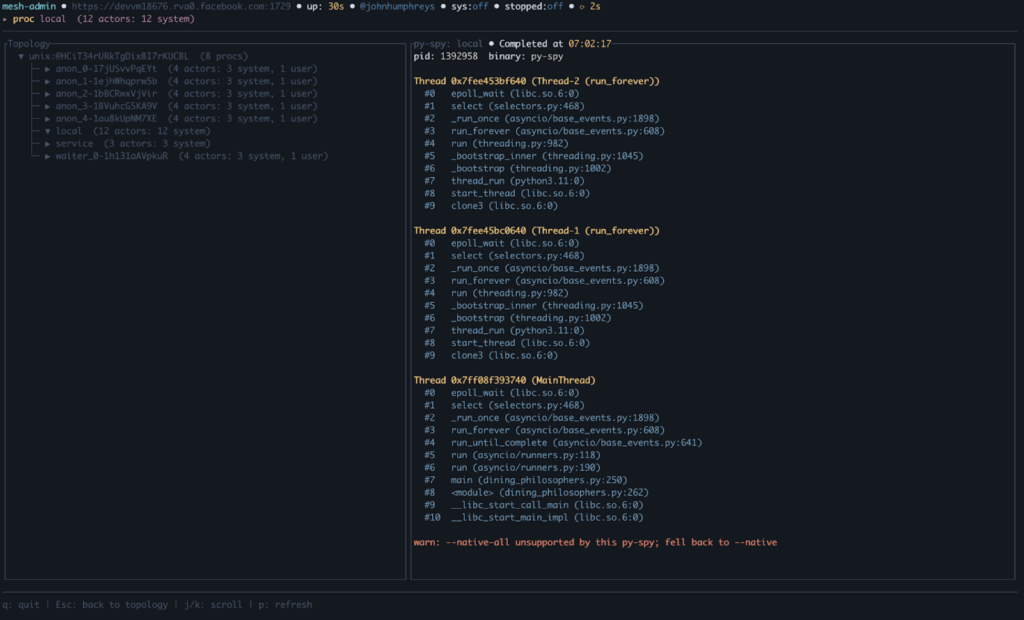

Monarch is a distributed programming framework for PyTorch that makes the cluster programmable through a simple Pytho...

Monarch presents itself as a revolutionary tool for distributed AI training, promising to democratize supercomputing by making clusters as accessible as local development environments. The strongest version of this narrative is compelling: by abstracting away the complexities of distributed systems, Monarch could significantly lower the barrier to entry for large-scale AI research, enabling faster iteration and more efficient debugging. The framework’s focus on agentic development, where AI agen...